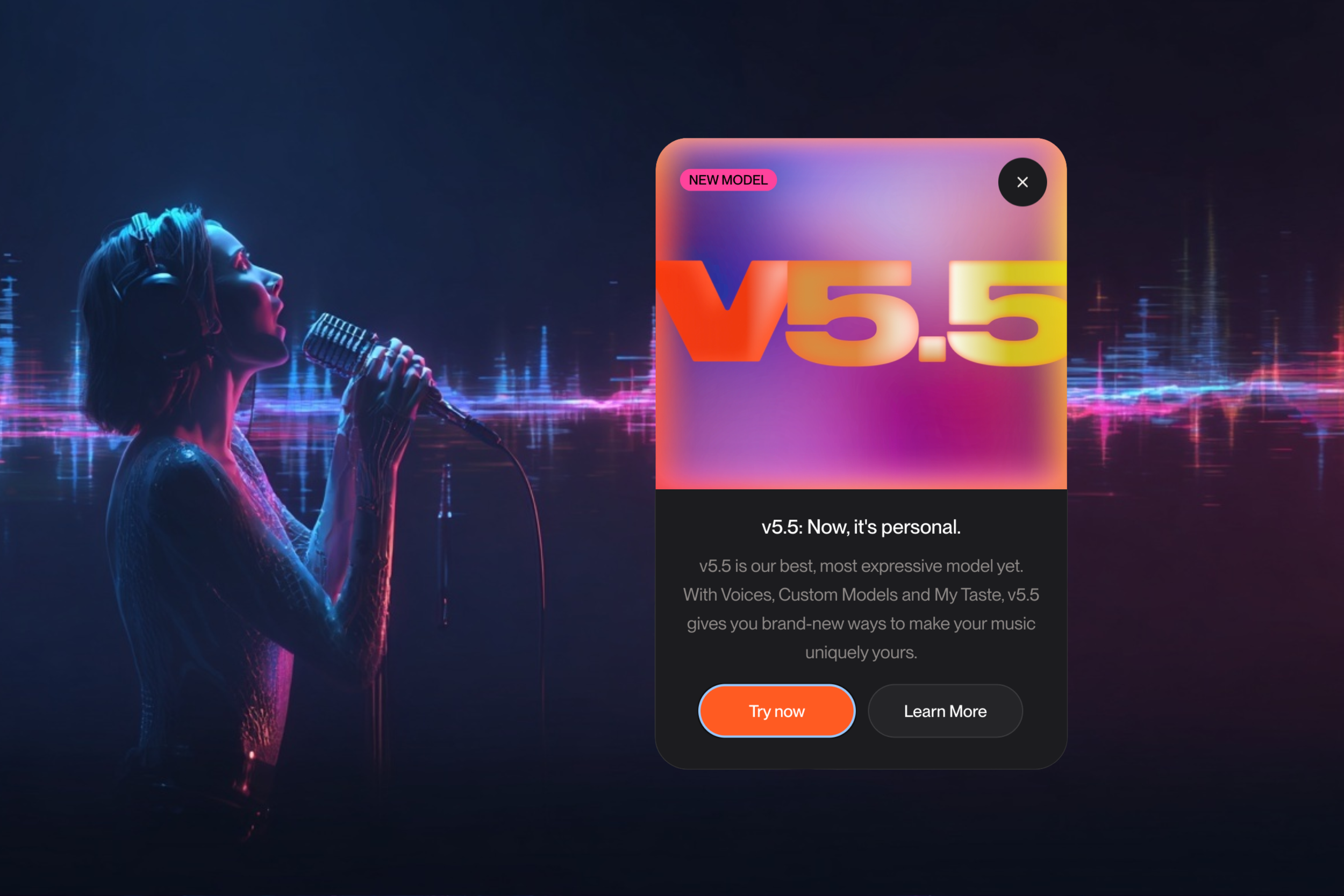

Use Your Voice & Train a Model Yourself with Suno v5.5

Latest version pushes AI music toward identity with voices, custom models, and taste training.

Suno just rolled out v5.5, and the direction is clear: personalization is becoming the core layer of AI music creation. The update introduces three new features (Voices, Custom Models, and My Taste), each designed to make outputs feel less generic and more tied to the creator behind them.

Voices is the headline move. Users can now record or upload their own voice and use it directly in generated songs, effectively turning Suno into a lightweight vocal instrument. The barrier to entry is low — no studio chain, no complex setup — and during beta, generations cost just 4 credits. It’s a direct response to growing demand for identity-driven outputs, where creators want songs that don’t just sound good, but sound like them.

Custom Models extend that idea further. By uploading at least six of their own tracks, users can train a personalized version of Suno that reflects their style and musical instincts. Instead of prompting a generic system, creators shape the system itself, nudging AI music toward a model where taste and authorship are embedded upstream.

My Taste adds a passive layer. The system learns from a user’s habits (genres, moods, references) and applies that data when generating new music via the Styles field. Over time, Suno begins to mirror a creator’s preferences without explicit input.

There are also structural changes. “Personas” has been rebranded as Voices, with prior functionality intact. Voices and Custom Models are currently limited to Pro and Premier subscribers, while My Taste is available to all users. One key caveat: while voices are private by default, sharing songs with remix or cover enabled may allow others to generate using that voice.

Suno previously announced that, following its settlement deal with Warner Music Group, it would be retiring its old models. With v5.5 now released, Suno’s timeline for that retirement and its broader intentions remain unclear.

The signal: AI music is moving from generation to identity. Tools aren’t just producing tracks — they’re learning who the creator is, and building systems around that.